Whoa! Seriously? Okay, hear me out—DeFi feels like the Wild West sometimes. My first impression was: sign, send, pray. Hmm… that gut feeling of “somethin’ ain’t right” is common. But that instinct can be turned into a superpower if you use the right toolset.

Let me be blunt. Most wallets show balances and let you approve transactions. They don’t show you what might happen under the hood. That’s a big problem. Smart contracts are deterministic, but the environment around them isn’t—gas, mempool dynamics, front-running bots, failing require statements. So the practical question is: how do you interact with complex contracts while minimizing surprises? Transaction simulation.

At first I thought simulation was just for devs. Actually, wait—let me rephrase that. I thought it was niche, reserved for engineers who run testnets and write unit tests. Then I watched a small trade lose 20% of its expected outcome because of a reentrancy-like check and a token fee. Ouch. That moment changed how I approach DeFi interactions.

Simulation is simple in concept. You replay the transaction in an environment that mirrors mainnet state and observe the result without broadcasting. But the devil lives in the details. The simulation needs to replicate block state, contract storage, and tx ordering; otherwise you get false confidence. On one hand, a quick simulation can flag immediate reverts. On the other hand, some edge cases, like interactions across multiple contracts that change state between blocks, can still surprise you, though you can design around many of them.
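Here's roughly what "replay without broadcasting" looks like at the RPC level. This is a minimal sketch, assuming a standard Ethereum JSON-RPC endpoint: `eth_call` takes a transaction object plus a block tag, and passing a concrete block number pins the call to that block's state. The helper name `build_eth_call`, the addresses, and the block height are hypothetical. Note that this reproduces state only, not the ordering of other pending transactions, and querying older blocks usually requires an archive node.

```python
import json


def build_eth_call(sender: str, to: str, data: str, block_number: int, value_wei: int = 0) -> dict:
    """Build a JSON-RPC eth_call request that executes a transaction against
    the state at `block_number` without signing or broadcasting anything."""
    tx = {
        "from": sender,
        "to": to,
        "data": data,             # ABI-encoded calldata (selector + arguments)
        "value": hex(value_wei),  # JSON-RPC quantities are hex strings
    }
    # params = [transaction, blockTag]; a hex block number pins the state snapshot
    return {"jsonrpc": "2.0", "id": 1, "method": "eth_call", "params": [tx, hex(block_number)]}


# Hypothetical addresses and block height, for illustration only.
payload = build_eth_call(
    sender="0x" + "11" * 20,
    to="0x" + "22" * 20,
    data="0x",
    block_number=19_000_000,
)
print(json.dumps(payload, indent=2))
```

You'd POST this payload to your RPC endpoint; a revert typically comes back as a JSON-RPC error instead of a result.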

Here’s what bugs me about the current UX. Many wallets bury simulation behind toggles, or they offer a binary “simulate/skip” choice with little context. Users want clarity: will this tx revert? Will I be front-run? How much slippage will I actually pay when gas spikes? They want answers, not just a green check. I’m biased, but the right interface should give layered insights: exact revert traces, estimated gas breakdown, and a simulation of post-execution balances. That level of feedback changes behavior.

Check this out—simulation isn’t only about “did it revert?” It’s about risk discovery. For instance, a simulated transaction might succeed in isolation but cause a token transfer to a contract that triggers a later require, because another token hook runs on transfer. You’d never catch that by eyeballing the ABI. So you simulate the full call graph and get a trace. Then you can decide to split the transaction, increase gas, or pick a different route.
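A trace is just a tree of call frames, so reading it programmatically is mostly a tree walk. This sketch assumes a nested trace shaped like the output of Geth's `callTracer` (frames with `type`, `from`, `to`, optional `error`/`revertReason`, and child `calls`). The helper names and the example trace are made up for illustration.

```python
from typing import Any, Dict, Iterator, List, Tuple

Frame = Dict[str, Any]


def walk_calls(frame: Frame, depth: int = 0) -> Iterator[Tuple[int, Frame]]:
    """Depth-first walk over a nested call trace, yielding (depth, frame)."""
    yield depth, frame
    for child in frame.get("calls", []):
        yield from walk_calls(child, depth + 1)


def summarize_trace(trace: Frame) -> List[str]:
    """One line per call frame, indented by depth, with failures flagged."""
    lines = []
    for depth, frame in walk_calls(trace):
        line = f"{'  ' * depth}{frame.get('type', 'CALL')} {frame.get('from')} -> {frame.get('to')}"
        if "error" in frame:
            # Prefer the decoded revert reason when the tracer provides one
            line += f"  [FAILED: {frame.get('revertReason', frame['error'])}]"
        lines.append(line)
    return lines


# Hypothetical trace: a swap whose inner token hook reverts.
trace = {
    "type": "CALL", "from": "0xUser", "to": "0xRouter",
    "calls": [
        {"type": "CALL", "from": "0xRouter", "to": "0xTokenA"},
        {"type": "CALL", "from": "0xRouter", "to": "0xPool",
         "calls": [{"type": "CALL", "from": "0xPool", "to": "0xTokenHook",
                    "error": "execution reverted", "revertReason": "hook: paused"}]},
    ],
}
for line in summarize_trace(trace):
    print(line)
```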

How transaction simulation changes smart contract interaction

Initially I thought “simulation = safety net” and that was enough. But then I realized it’s also an optimization tool. Seriously. With a precise simulation you can choose a gas strategy that avoids mempool squeezes. You can predict slippage and set better limits. My instinct said speed wins, but sometimes patience combined with precise gas pricing gives a better effective price, especially on DEXs with time-weighted liquidity changes.
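The "set better limits" part is plain arithmetic once you have a simulated output. A minimal sketch, assuming amounts in the token's smallest unit and tolerances in basis points (1 bps = 0.01%). The function names are hypothetical, not any wallet's or DEX's API.

```python
def min_amount_out(simulated_out: int, slippage_bps: int) -> int:
    """Lowest output you'll accept, derived from the simulated output rather than
    the UI quote. Integer math, since on-chain amounts are integers."""
    if not 0 <= slippage_bps <= 10_000:
        raise ValueError("slippage_bps must be between 0 and 10000")
    return simulated_out * (10_000 - slippage_bps) // 10_000


def shortfall_bps(quoted_out: int, simulated_out: int) -> int:
    """How far the simulated result falls short of the quote, in basis points
    (negative if the simulation came out better than the quote)."""
    return (quoted_out - simulated_out) * 10_000 // quoted_out


# Hypothetical numbers: the UI quoted 1,000,000 units, the simulation says 980,000.
print(shortfall_bps(1_000_000, 980_000))  # 200, i.e. 2% worse than quoted
print(min_amount_out(980_000, 50))        # 975100, a 0.5% tolerance around the simulated result
```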

On-chain state is messy. There are flash loans, bots, and stale liquidity pools. A robust simulator will snapshot the chain state at the exact block and then run the call with identical preconditions. If it reports an unexpected balance change or a high gas refund, you can re-evaluate. Hmm… sometimes the simulation’s top-level result says “success” while the trace shows a costly approve() being called repeatedly because a token contract lacks allowance checks. That’s the kind of thing that eats gains slowly, very very slowly.
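Spotting that repeated-approve pattern doesn't need anything fancy: count function selectors across the whole trace. A sketch assuming a nested trace in which each frame carries its calldata under `input` and its children under `calls` (as Geth's `callTracer` does). `0x095ea7b3` is the standard selector for ERC-20 `approve(address,uint256)`; `0xdeadbeef` and the rest of the example data are placeholders.

```python
from collections import Counter
from typing import Any, Dict, Iterator

# First 4 bytes of keccak256("approve(address,uint256)")
APPROVE_SELECTOR = "0x095ea7b3"


def flatten(frame: Dict[str, Any]) -> Iterator[Dict[str, Any]]:
    """Yield every frame in a nested call trace, parent before children."""
    yield frame
    for child in frame.get("calls", []):
        yield from flatten(child)


def selector_counts(trace: Dict[str, Any]) -> Counter:
    """Count how many times each 4-byte function selector is called in the trace."""
    counts: Counter = Counter()
    for frame in flatten(trace):
        data = frame.get("input", "0x").lower()
        if len(data) >= 10:  # "0x" plus 8 hex characters
            counts[data[:10]] += 1
    return counts


# Hypothetical trace: approve() shows up three times inside one transaction.
approve_call = {"input": APPROVE_SELECTOR + "00" * 64}
trace = {"input": "0xdeadbeef",
         "calls": [approve_call, approve_call, {"input": "0xdeadbeef", "calls": [approve_call]}]}

counts = selector_counts(trace)
if counts[APPROVE_SELECTOR] > 1:
    print(f"approve() called {counts[APPROVE_SELECTOR]} times in one transaction")
```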

If you’re interacting with complex DeFi primitives—lending pools, vaults, multipliers, or leverage—you should simulate every multi-step action. For example: a repay-then-withdraw sequence might pass locally but fail when attempted on mainnet because an intermediary hook modifies the deposit index mid-execution. On one hand, simulation gives you the visibility to catch that. On the other hand, there are limits: off-chain price oracles and cross-chain finality can still introduce variance. That said, simulation reduces the unknowns dramatically.

Tools matter. Not all simulation is equal. Some simulators only run basic EVM traces; others emulate mempool behavior and pending transactions. The best ones stitch together RPC call history, decode events into user-friendly messages, and show you the expected post-transaction portfolio. That’s where wallets started to earn trust.

Okay, so practical tips. First, always run a simulation before approving a contract call you haven’t used before. Seriously. Second, check the call trace—look for external calls, token transfers, and any revert reasons. Third, use the simulation output to calibrate slippage and maxFeePerGas settings. And fourth, if you’re doing multi-contract flows, simulate every step as a single atomic batch when possible. If that fails, break it into smaller truth-checked chunks.
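The "atomic batch, else smaller chunks" idea can be sketched without any chain at all. This is a toy in-memory model, assuming each step is a function that mutates a state dict and raises to signal a revert. The lending rules in `repay`/`withdraw` are invented for illustration; a real simulator would typically apply the same all-or-nothing logic against forked chain state.

```python
import copy
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, int]
Step = Callable[[State], None]


class StepFailed(Exception):
    """Raised by a step to signal a revert."""


def simulate_batch(state: State, steps: List[Tuple[str, Step]]) -> Tuple[bool, State, Optional[str]]:
    """Apply all steps to a private copy of `state`, all-or-nothing.
    On failure the caller gets the untouched original state back plus the failing step."""
    sandbox = copy.deepcopy(state)
    for name, step in steps:
        try:
            step(sandbox)
        except StepFailed as exc:
            return False, state, f"{name}: {exc}"
    return True, sandbox, None


# Toy lending position (hypothetical rules, not any real protocol).
def repay(amount: int) -> Step:
    def step(s: State) -> None:
        if s["wallet"] < amount:
            raise StepFailed("insufficient balance")
        s["wallet"] -= amount
        s["debt"] -= amount
    return step


def withdraw(amount: int) -> Step:
    def step(s: State) -> None:
        if s["debt"] > 0:
            raise StepFailed("debt outstanding")
        s["collateral"] -= amount
        s["wallet"] += amount
    return step


position = {"wallet": 100, "debt": 150, "collateral": 500}
ok, result, error = simulate_batch(position, [("repay", repay(100)), ("withdraw", withdraw(500))])
print(ok, error)  # the repay is only partial, so the withdraw step is what fails
```

If the batch fails, the error string tells you which step to split out and re-check on its own.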

Now, about UX again—users need plain language. Instead of seeing “CALL succeeded”, they’d get “This call will transfer X tokens to Contract Y, which then calls Contract Z and may trigger a fee of A%.” That kind of description matters. It reduces mistakes. It also helps new DeFi users learn contract behavior without reading Solidity.
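Event logs are a good source for that kind of plain-language summary. A minimal sketch, assuming the simulator returns raw logs in the usual JSON-RPC shape (`address`, `topics`, `data`) and that you already know each token's symbol and decimals. The topic hash is the standard `Transfer(address,address,uint256)` event signature; the token, addresses, symbol, and amounts below are hypothetical.

```python
from decimal import Decimal
from typing import Any, Dict, List

# keccak256("Transfer(address,address,uint256)"), the ERC-20 Transfer event signature
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


def topic_to_address(topic: str) -> str:
    """Indexed address parameters are left-padded to 32 bytes; keep the last 20 bytes."""
    return "0x" + topic[-40:]


def describe_transfers(logs: List[Dict[str, Any]], symbols: Dict[str, str],
                       decimals: Dict[str, int]) -> List[str]:
    """Turn raw ERC-20 Transfer logs into plain-language sentences."""
    sentences = []
    for log in logs:
        topics = [t.lower() for t in log["topics"]]
        # ERC-20 Transfer has 3 topics; ERC-721 reuses the signature but also indexes the token id (4 topics)
        if len(topics) != 3 or topics[0] != TRANSFER_TOPIC:
            continue
        token = log["address"].lower()
        raw_amount = int(log["data"], 16)
        amount = Decimal(raw_amount) / (Decimal(10) ** decimals.get(token, 18))
        sentences.append(
            f"{topic_to_address(topics[1])} will transfer {amount} {symbols.get(token, token)} "
            f"to {topic_to_address(topics[2])}"
        )
    return sentences


# Hypothetical token address, symbol, and decimals, for illustration only.
token = "0x" + "aa" * 20
logs = [{
    "address": token,
    "topics": [TRANSFER_TOPIC, "0x" + "00" * 12 + "11" * 20, "0x" + "00" * 12 + "22" * 20],
    "data": "0x" + format(1_500_000, "064x"),
}]
for sentence in describe_transfers(logs, symbols={token: "TKN"}, decimals={token: 6}):
    print(sentence)
```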

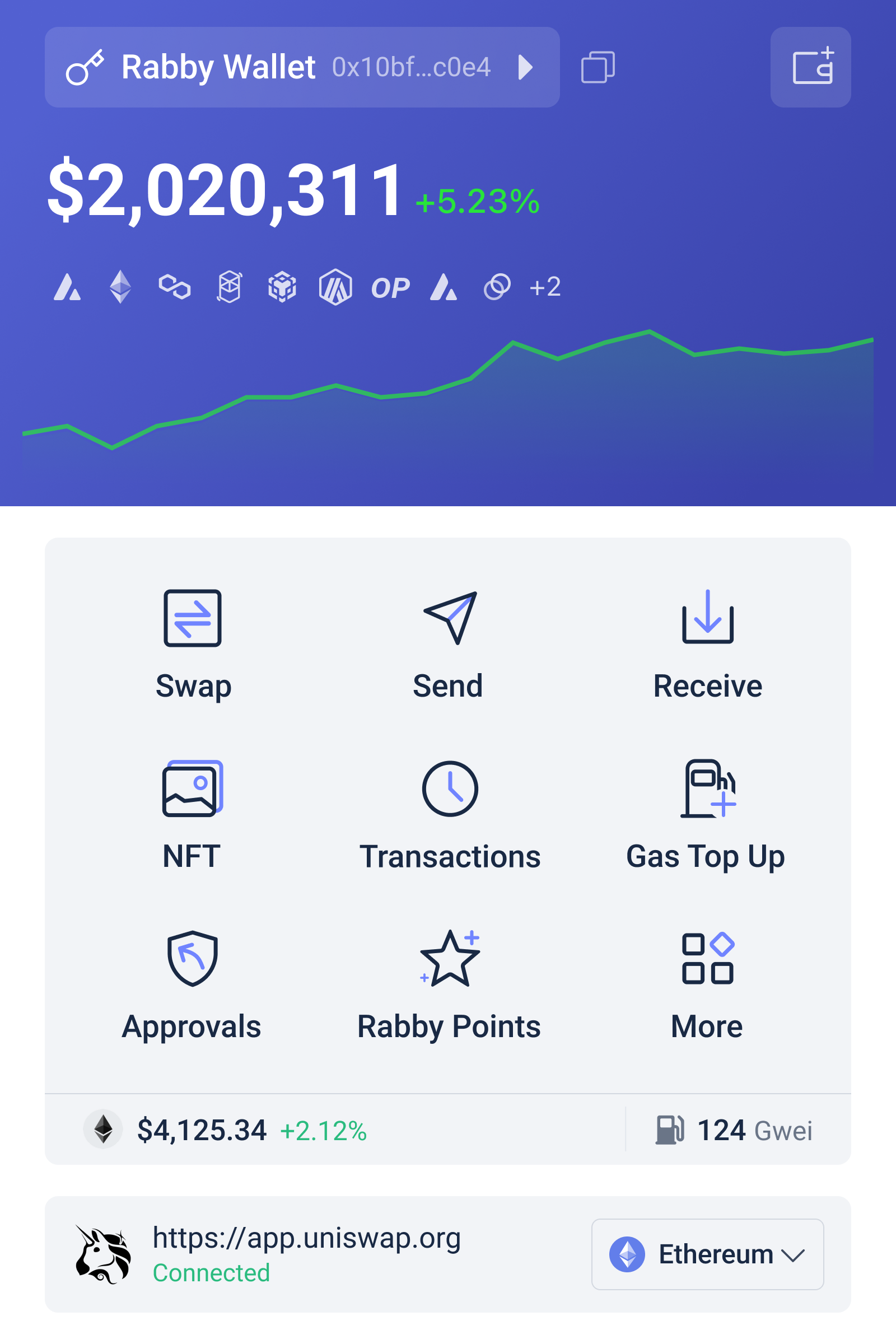

Which brings me to one practical recommendation: if you want simulation built into your everyday wallet experience, try a wallet that emphasizes developer-grade tooling while staying user-friendly. I’ve found that integrating simulation into the send/approve flow, where it runs automatically and flags anomalies, is a huge productivity boost. One such option that does this well is Rabby Wallet. It’s designed with transaction simulation and granular permission controls, so you get both safety and control in a single place.

I’ll be honest—no tool is perfect. I’m not 100% sure any simulator can capture every mempool manipulation possible. There are edge-case attack vectors that rely on timing and chain reorganizations that are hard to fully emulate. But the marginal benefit of running simulations before interacting with novel contracts is enormous. It turns luck-based trading into evidence-based operations.

Here’s a short checklist for using transaction simulation effectively:

- Run a full call trace and read event logs.

- Look for unexpected transfers or external calls.

- Estimate final token balances, not just success/failure.

- Calibrate slippage and gas using the simulation’s numbers.

- Re-simulate after any mempool or liquidity change.
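For the gas side of that checklist, here's a sketch of turning simulated gas usage into EIP-1559 fee fields. The 20% gas-limit buffer and the `2 * baseFee + tip` fee cap are common heuristics I'm assuming here, not rules; the function and its parameters are hypothetical.

```python
from typing import Dict

GWEI = 10**9


def gas_settings(simulated_gas_used: int, base_fee_wei: int, priority_fee_wei: int,
                 buffer_bps: int = 2_000) -> Dict[str, int]:
    """Derive EIP-1559 fee fields from a simulation result.

    gasLimit: simulated usage plus a safety buffer (default 20%), since state can
    shift between simulation and inclusion.
    maxFeePerGas: 2 * baseFee + tip, a common heuristic that tolerates the base fee
    rising over several consecutive full blocks (at most 12.5% per block).
    """
    gas_limit = simulated_gas_used * (10_000 + buffer_bps) // 10_000
    max_fee = 2 * base_fee_wei + priority_fee_wei
    return {
        "gasLimit": gas_limit,
        "maxFeePerGas": max_fee,
        "maxPriorityFeePerGas": priority_fee_wei,
        # Upper bound only: the effective price is min(maxFee, baseFee + tip), times gas actually used
        "worstCaseCostWei": gas_limit * max_fee,
    }


# Hypothetical inputs: simulation used 150,000 gas; base fee 20 gwei; 1 gwei tip.
print(gas_settings(150_000, 20 * GWEI, 1 * GWEI))
```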

Some quick scenarios where simulation saved me money. Once I routed a swap through a DEX aggregator and simulation revealed that one path included a token that charges a fee on every transfer. That path looked cheaper on price but would have netted less after the hidden fee. Simulation flagged it. Boom. I chose another path. Another time, a leverage action would have reverted because of a supply cap in a lending protocol that I’d never noticed; simulation showed the exact revert reason, so I avoided paying gas for a failed tx. These are small wins, but they add up.
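The first scenario boils down to comparing what each route actually delivers rather than its headline price. A minimal sketch, assuming the simulation gives you each route's quoted output and the transfer fee its token charges, and that the fee applies once on the final transfer (tokens that charge on every hop would compound). `Route` and the numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Route:
    name: str
    quoted_out: int        # what the price quote promises, in the output token's smallest unit
    transfer_fee_bps: int  # fee the token skims on transfer, as surfaced by simulation

    def net_out(self) -> int:
        # Assumes the fee is charged once, on the final transfer to you
        return self.quoted_out * (10_000 - self.transfer_fee_bps) // 10_000


def best_route(routes: List[Route]) -> Route:
    """Pick the route that leaves the most tokens in your wallet."""
    return max(routes, key=lambda r: r.net_out())


# Hypothetical numbers: route A quotes more, but its token takes a 2% transfer fee.
routes = [Route("A", quoted_out=1_000, transfer_fee_bps=200), Route("B", quoted_out=990, transfer_fee_bps=0)]
choice = best_route(routes)
print(choice.name, choice.net_out())  # B 990 (A nets only 980)
```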

On the technical side, good simulators reconstruct the EVM environment, including pending nonces, gas price estimations, and recent block logs. They often combine public RPC endpoints with archive nodes. If they can replay transactions against accurate archival state, you get more reliable results. But not every wallet has access to archive nodes due to cost. That’s a trade-off: decentralization and cost vs. fidelity. Still, it’s better to have approximate simulation than none at all.

Something else that bugs me: permission fatigue. Approve() calls with infinite allowances still proliferate. Simulation can highlight allowance changes and their scope—like which contracts get access and for how much. That should be front and center in approval flows. I’m biased toward wallets that let you set granular permissions and simulate the eventual token flow before granting approvals.
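Surfacing allowance scope starts with decoding the approval itself. A sketch assuming plain ERC-20 `approve(address,uint256)` calldata (selector `0x095ea7b3`, followed by two 32-byte ABI words). It only flags the exact max-uint256 value as unlimited, although some dApps request other very large amounts, so treat that check as a starting point. Names and example values are hypothetical.

```python
from typing import Any, Dict, Optional

APPROVE_SELECTOR = "0x095ea7b3"  # approve(address,uint256)
MAX_UINT256 = 2**256 - 1


def decode_approve(calldata: str) -> Optional[Dict[str, Any]]:
    """Decode ERC-20 approve() calldata; returns None for any other function."""
    data = calldata.lower()
    if not data.startswith(APPROVE_SELECTOR):
        return None
    args = data[len(APPROVE_SELECTOR):]
    if len(args) != 128:  # two 32-byte words, hex-encoded
        raise ValueError("approve() expects exactly two 32-byte arguments")
    spender = "0x" + args[24:64]  # the address sits in the low 20 bytes of the first word
    amount = int(args[64:128], 16)
    return {"spender": spender, "amount": amount, "unlimited": amount == MAX_UINT256}


# Hypothetical spender being granted the maximum uint256 allowance.
calldata = APPROVE_SELECTOR + "00" * 12 + "33" * 20 + "ff" * 32
decoded = decode_approve(calldata)
if decoded and decoded["unlimited"]:
    print(f"Unlimited allowance requested for {decoded['spender']}")
```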

There are also composability risks. When you interact with complex vaults and yield strategies, a single approved contract might execute dozens of back-end calls that affect other positions. The right simulator will list those downstream interactions. You’ll then decide whether to approve, simulate a smaller test, or use a safeguard like a timelocked multisig to gate large operations. It’s about adding friction where the risk is highest and removing it where it’s low.

Okay—small tangent (oh, and by the way…): decentralization purists sometimes argue you shouldn’t presuppose safety via tooling; you should audit every contract. Sure, audits help. But audits plus simulation plus cautious UX is the pragmatically safer path for everyday users. Audits are static; simulation is dynamic. Together they reduce both known and unknown failure modes.

FAQ — Quick practical answers

Q: Will simulation prevent all failed transactions?

A: No. It reduces the chances dramatically, but it can’t predict chain reorganizations or certain MEV behaviors perfectly. Still, it catches the majority of common failure modes and many costly surprises.

Q: How often should I simulate?

A: Always for unfamiliar contracts or complex flows. For routine sends to known addresses, you can be less strict—but if value is significant, simulate anyway. My rule: simulate when I’m not 100% sure, and definitely when the transaction involves approvals or multi-contract steps.

Wrapping up, simulation moves you from reactive to proactive. It’s not a panacea, but it’s the most accessible lever to improve on-chain interactions today. I started skeptical, then simulated once, and that result stuck with me. It changed my behavior. I’m still learning. Some parts bug me—like flaky RPCs and imperfect state—but overall simulation is the lever every advanced DeFi user should pull. Try it. Test it. Then you’ll see why it matters.